This is Article 03 of 03 in the Beyond the Container series — three articles for engineering leaders navigating the post-Kubernetes era.

Here is a counterintuitive prediction worth taking seriously: the first generation of AI-agent-managed infrastructure will cost more to run than what you have today. Not because AI is inefficient. Because AI agents will provision, adjust, and optimize at a granularity and speed no human team can match — and in the early phases, some of those decisions will be suboptimal as the models learn from your specific environment.

The "AI reduces costs immediately" narrative misses this. Cost reduction comes, but not first. First comes expanded operational coverage, then the gradual optimization that transforms that coverage into superior economics. The organizations that accept a short-term learning-phase cost premium will emerge with infrastructure that continuously self-optimizes toward a Pareto frontier of cost, performance, and carbon efficiency that no human ops team could sustain at scale.

The analogy that holds here is algorithmic trading. Early algorithmic strategies broke even or lost money until feedback loops tightened and models learned from live data. The organizations that invested through the learning phase built the systems that define market microstructure today. Infrastructure autonomy follows the same pattern.

"The most expensive infrastructure decision you can make right now is to wait for AI-native operations to be 'enterprise ready.' By the time it looks enterprise-ready, your competitors will have completed their learning phase."

Three Properties of Infrastructure That Thinks

Property 1: Continuous Autonomous Provisioning

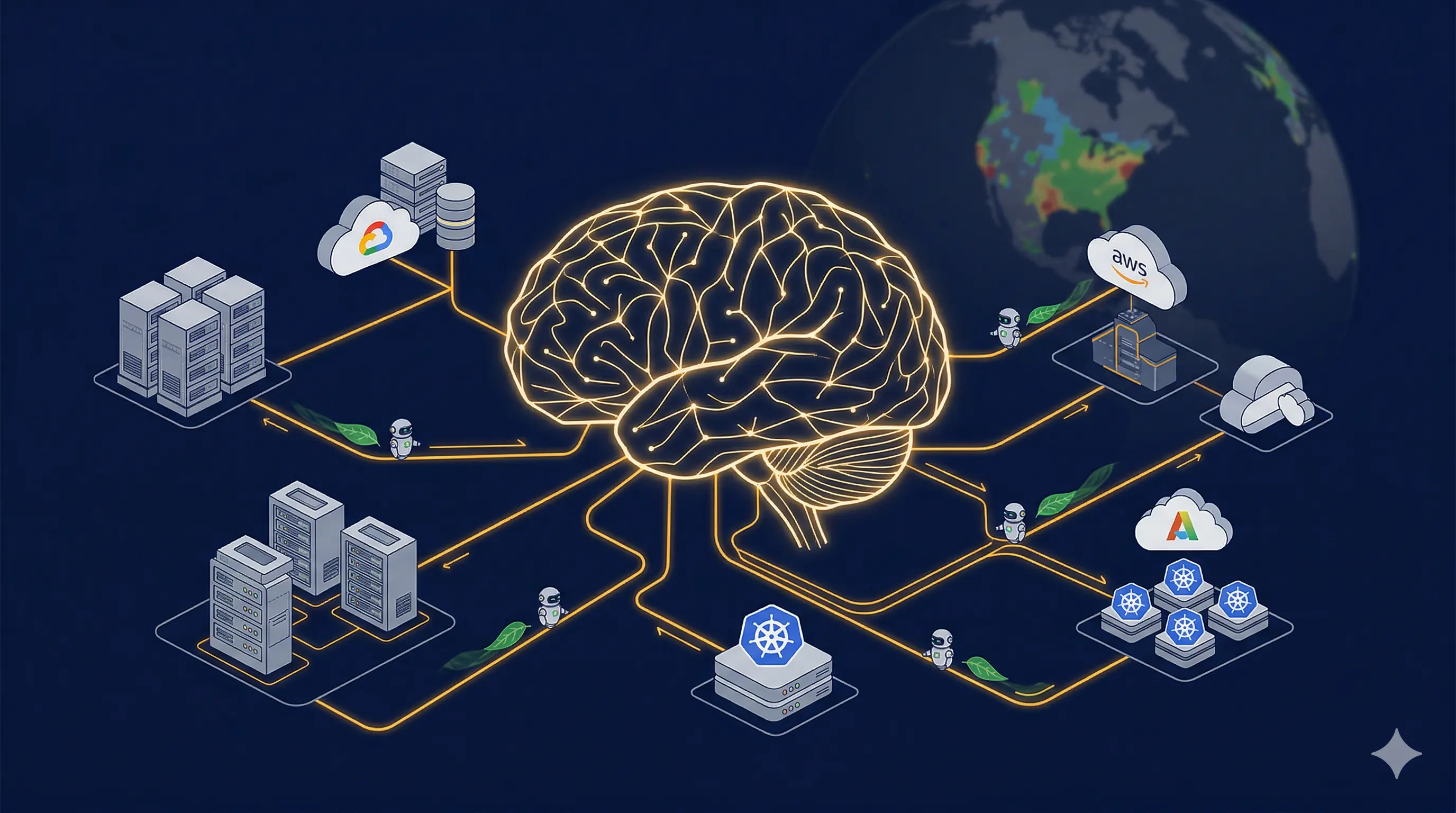

AI agents can close the loop between observability signals and infrastructure mutations without human approval for pre-classified change types. The GitOps workflow remains intact. The agent becomes the PR author. Every infrastructure change is still version-controlled, auditable, and subject to the review rules for its change class. The difference is who initiates it.

The governance model that works in practice uses a three-tier classification:

- Tier one, fully autonomous: pre-approved patterns such as scaling events, certificate rotation, and autoscaling parameter adjustments.

- Tier two, AI-proposed with human approval: novel change patterns.

- Tier three, human-initiated only: core security and governance configuration.

The agent operates freely in tier one, learns from tier two, and is never in scope for tier three.
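A minimal sketch of how the three tiers could drive an agent's behavior in a GitOps flow follows. The change-type names, the `ChangeProposal` record, and the routing outcomes are hypothetical illustrations, not lowtouch.ai's or any tool's API; in practice the taxonomy is whatever the customer defines. The one design choice worth copying is the default: anything not explicitly pre-approved falls into tier two, so a novel change can never merge without a human.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = 1       # tier one: the agent may merge its own PR
    HUMAN_APPROVAL = 2   # tier two: the agent opens a PR, a human must approve
    HUMAN_ONLY = 3       # tier three: the agent must not author the change


# Hypothetical taxonomy: the real lists are defined by the customer together
# with engineering, security, and compliance stakeholders.
AUTONOMOUS_TYPES = {"scale_replicas", "rotate_certificate", "tune_autoscaler"}
HUMAN_ONLY_TYPES = {"iam_policy", "network_policy", "audit_config"}


@dataclass
class ChangeProposal:
    change_type: str
    target: str
    rationale: str  # the agent's logged reasoning, kept for audit


def classify(change: ChangeProposal) -> Tier:
    """Map a proposed change onto a governance tier.

    Security-sensitive types are checked first; anything not explicitly
    pre-approved defaults to tier two (human approval required).
    """
    if change.change_type in HUMAN_ONLY_TYPES:
        return Tier.HUMAN_ONLY
    if change.change_type in AUTONOMOUS_TYPES:
        return Tier.AUTONOMOUS
    return Tier.HUMAN_APPROVAL


def route(change: ChangeProposal) -> str:
    """Decide what the agent does with its proposal."""
    tier = classify(change)
    if tier is Tier.AUTONOMOUS:
        return "open_pr_and_auto_merge"
    if tier is Tier.HUMAN_APPROVAL:
        return "open_pr_and_request_review"
    return "reject_and_notify_owner"
```

For example, `route(ChangeProposal("rotate_certificate", "ingress-tls", "certificate expires in 7 days"))` returns `"open_pr_and_auto_merge"`, while an unlisted change type such as `"new_vpc_peering"` returns `"open_pr_and_request_review"`.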

This is exactly the model implemented in lowtouch.ai's SRE Agent and FinOps Agent, which are deployed inside customer infrastructure. Agents operate with human-in-the-loop (HITL) controls, full thought-logging for auditability, and pre-classified change taxonomies that the customer defines. Governed autonomy, not unchecked automation.

Building that governance framework is a change management program, not a technology deployment. It requires explicit risk appetite from leadership, a change classification taxonomy developed with engineering, security, and compliance stakeholders, and a culture that treats "the agent made this change" as equally accountable as "an engineer made this change."

Property 2: Carbon-Aware Scheduling as a First-Class Constraint

The carbon intensity of the electricity behind a cloud region varies significantly by time of day and by energy mix. Optimizing for carbon often lowers cost as well, because low-carbon hours frequently coincide with off-peak periods, when spot and preemptible capacity tends to be cheaper and more available. The Green Software Foundation's Carbon Aware SDK makes it technically straightforward to query current and forecast carbon intensity for a location, drawing on data providers such as WattTime and Electricity Maps, while the Cloud Carbon Footprint project estimates the emissions of your existing cloud usage.

Organizations that integrate this signal into their batch workload schedulers — routing training jobs, data pipelines, backup operations, and batch reporting to run when and where the grid is greenest — are simultaneously reducing emissions and reducing cloud spend.
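Choosing "when and where" reduces to a small search once forecasts are in hand. The sketch below assumes hourly carbon-intensity forecasts (gCO2e/kWh) have already been fetched per region, for instance from the Carbon Aware SDK's forecast data; it does not call the SDK, and the region names are placeholders. It also assumes the job draws roughly constant power, so the slot with the lowest mean intensity is the lowest-emission slot.

```python
from typing import Dict, List, Optional, Tuple


def best_slot(
    forecasts: Dict[str, List[float]],
    duration_hours: int,
    window_hours: int,
) -> Optional[Tuple[str, int, float]]:
    """Return (region, start_hour, mean_intensity) for the greenest slot.

    forecasts maps a region name to hourly intensity forecasts in gCO2e/kWh,
    where index 0 is the current hour. The whole job (duration_hours long)
    must start and finish inside the next window_hours hours.
    Returns None if the job cannot fit in any region's window.
    """
    if duration_hours <= 0:
        raise ValueError("duration_hours must be positive")
    best: Optional[Tuple[str, int, float]] = None
    for region, series in forecasts.items():
        # Only hours covered by both the window and the forecast are usable.
        horizon = min(window_hours, len(series))
        for start in range(horizon - duration_hours + 1):
            # Constant power draw assumed, so mean intensity ranks the slots.
            mean = sum(series[start:start + duration_hours]) / duration_hours
            if best is None or mean < best[2]:
                best = (region, start, mean)
    return best
```

With forecasts `{"region-a": [400, 350, 300, 320, 500], "region-b": [450, 200, 220, 380, 390]}`, a 2-hour job, and a 4-hour window, it returns `("region-b", 1, 210.0)`: start one hour from now in `region-b`.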

| Dimension | Traditional Scheduling | Carbon-Aware Scheduling |
|---|---|---|
| Scheduling | Fixed (cron-based) | Dynamic, carbon-aware |
| Carbon intensity | Uncontrolled | Minimized by design |
| Cost | Standard on-demand pricing | 15–30% reduction (off-peak and spot) |
| Emissions reporting | Estimated | Measured and auditable |
| Regulatory readiness | Reactive | Proactive |

For AI workloads specifically, large model training runs are among the most carbon-intensive workloads in enterprise computing. A training run scheduled with carbon awareness, running its most compute-intensive phases during periods of high renewable generation, can reduce both the carbon footprint and the cost of AI model development without sacrificing model quality: shifting when the computation happens does not change what is computed.
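When phases differ in power draw, the best start time is the one that lines the heaviest phase up with the cleanest hours, which a plain average over the run misses. A sketch, assuming a known per-hour power profile for the run (the kW figures are hypothetical) and an hourly intensity forecast that ends at the run's deadline. It shifts the run as one contiguous block; a job that checkpoints could also be split across non-adjacent hours, which this sketch does not attempt.

```python
from typing import List, Tuple


def best_start_for_profile(
    power_kw: List[float],
    intensity: List[float],
) -> Tuple[int, float]:
    """Choose the start hour that minimizes total emissions for a run
    whose power draw varies by hour (e.g. heavier compute phases).

    power_kw[i] is the run's average draw during hour i of the run.
    intensity[t] is the forecast grid intensity (gCO2e/kWh) for hour t;
    the run must finish by the end of the forecast (its deadline).
    Returns (start_hour, emissions_kg).
    """
    n = len(power_kw)
    if n == 0 or n > len(intensity):
        raise ValueError("run does not fit before the deadline")
    best_start, best_grams = 0, float("inf")
    for start in range(len(intensity) - n + 1):
        # kW sustained for one hour = kWh; kWh * gCO2e/kWh = grams CO2e
        grams = sum(p * intensity[start + i] for i, p in enumerate(power_kw))
        if grams < best_grams:
            best_start, best_grams = start, grams
    return best_start, best_grams / 1000.0
```

With `power_kw=[100, 400, 100]` and `intensity=[300, 250, 100, 200, 350]`, it returns `(1, 85.0)`: starting at hour 1 places the 400 kW phase on the 100 gCO2e/kWh hour.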

Property 3: Composable, Reconfigurable Hardware Fabrics

Composable infrastructure means that the hardware configuration of a node is no longer fixed at purchase time. CPU, GPU, memory, and storage are disaggregated into pools, connected by PCIe and CXL fabrics, and orchestrated by management software. Consider an AI agent that not only schedules workloads across existing nodes but also assembles the node configuration a workload requires: it requests 2TB of CXL-attached memory for a large model inference run and releases it when the run is done. That is a fundamentally different operational model from anything Kubernetes contemplates.
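To make the acquire-and-release pattern concrete, here is a toy model of a shared memory pool. `MemoryPool` and `composed_memory` are hypothetical names; real composable systems expose this through vendor management software with their own APIs, and nothing here models CXL itself. The context manager guarantees the memory returns to the pool even if the workload fails, which is the property an autonomous agent needs most when it composes hardware on demand.

```python
from contextlib import contextmanager
from typing import Dict, Iterator


class MemoryPool:
    """Toy model of a disaggregated, fabric-attached memory pool.

    Hypothetical: it only tracks capacity per node, standing in for the
    vendor management software that would actually attach the memory.
    """

    def __init__(self, capacity_gib: int) -> None:
        self.capacity_gib = capacity_gib
        self.allocations: Dict[str, int] = {}

    @property
    def free_gib(self) -> int:
        return self.capacity_gib - sum(self.allocations.values())

    def attach(self, node: str, size_gib: int) -> None:
        if size_gib > self.free_gib:
            raise RuntimeError(f"pool has only {self.free_gib} GiB free")
        self.allocations[node] = self.allocations.get(node, 0) + size_gib

    def detach(self, node: str, size_gib: int) -> None:
        remaining = self.allocations.get(node, 0) - size_gib
        if remaining < 0:
            raise RuntimeError("detaching more memory than is attached")
        if remaining == 0:
            self.allocations.pop(node, None)
        else:
            self.allocations[node] = remaining


@contextmanager
def composed_memory(pool: MemoryPool, node: str, size_gib: int) -> Iterator[None]:
    """Attach memory for the lifetime of one workload, then release it."""
    pool.attach(node, size_gib)
    try:
        yield
    finally:
        # Runs on success and on failure, so capacity is never leaked.
        pool.detach(node, size_gib)
```

An agent serving a large inference run might wrap it in `with composed_memory(pool, "inference-node-1", 2048):`, with 2048 GiB standing in for the 2TB in the example above; when the block exits, the pool's free capacity is restored.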

HPE GreenLake, Liqid's composable fabric, and the emerging CXL 3.0 memory pooling standard are the leading indicators of where this goes. Organizations building private and hybrid cloud infrastructure today that incorporate composable principles are laying the hardware substrate that AI-native autonomous operations will require.

The Experiment That Pays for Itself

Carbon-aware batch scheduling is the lowest-risk, highest-signal entry point into this way of thinking. Here is a specific experiment you can run in 30 days:

- Identify your three largest recurring batch workloads, such as data pipelines, model training jobs, and backup operations.

- Integrate the Green Software Foundation's Carbon Aware SDK with your job scheduler (for example Airflow, Argo Workflows, or AWS Step Functions).

- Configure each workload to run within a 4-hour execution window, with the SDK's forecast selecting the lowest-carbon start time within that window.

- Run for 30 days. Against a baseline of the same workloads on their existing fixed schedules, measure the cloud cost delta, carbon reduction in kg CO2e, and execution latency impact.

- Use the results to build the business case for the next tier: autonomous scaling decisions with a combined cost and carbon objective function.
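The measurements in the fourth step reduce to simple arithmetic once both periods are totaled. A sketch, assuming you can export per-period cost, energy (kWh), energy-weighted average grid intensity, and average completion time for the same workloads; the `PeriodStats` fields are hypothetical names. Emissions use the standard conversion kg CO2e = kWh × gCO2e/kWh ÷ 1000.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PeriodStats:
    """Totals for one 30-day period of the same batch workloads."""
    cost_usd: float
    energy_kwh: float
    avg_intensity_g_per_kwh: float  # energy-weighted average grid intensity
    avg_completion_minutes: float   # submit-to-finish time, including deferral


def kg_co2e(p: PeriodStats) -> float:
    # kWh * gCO2e/kWh = grams; divide by 1000 for kilograms
    return p.energy_kwh * p.avg_intensity_g_per_kwh / 1000.0


def compare(baseline: PeriodStats, carbon_aware: PeriodStats) -> Dict[str, float]:
    """Compute cost delta, carbon reduction, and latency impact."""
    return {
        "cost_delta_pct": 100.0 * (carbon_aware.cost_usd - baseline.cost_usd)
        / baseline.cost_usd,
        "carbon_reduction_kg": kg_co2e(baseline) - kg_co2e(carbon_aware),
        "latency_delta_minutes": carbon_aware.avg_completion_minutes
        - baseline.avg_completion_minutes,
    }
```

A negative `cost_delta_pct` means the carbon-aware period was cheaper; a positive `latency_delta_minutes` is the price paid in deferral, which the execution window keeps bounded.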

The data from this experiment does three things simultaneously: demonstrates cost reduction, provides measurable carbon data for ESG reporting, and builds organizational muscle for constraint-aware autonomous scheduling — which is the foundational capability for AI-agent-managed infrastructure.

Where CloudControl and lowtouch.ai Fit In

Our lowtouch.ai platform is a private, no-code Agentic AI platform for enterprises. It deploys entirely inside your infrastructure. Your data never leaves. It connects to your existing enterprise systems, observability stack, ITSM platforms, cloud APIs, and financial systems — and turns those connections into governed, auditable AI agents without requiring an AI engineering team to build them.

The pre-built agent catalog includes the SRE Agent for autonomous incident detection and resolution, the FinOps Agent for cloud cost optimization, the Migration Agent for TCO and risk analysis that accelerates migration decisions by 40%, and the Compliance Agent for regulatory automation across ISO 27001, SOC 2, GDPR, and RBI frameworks. Agents are deployed in 4 to 6 weeks and are customizable without writing code.

The organizations that begin building AI-agent operations capabilities now — accepting the learning-phase complexity — are those that will complete their optimization cycle while competitors are still evaluating pilots. This is not a 2027 problem to solve in 2027. The build window is now.

The challenge worth taking seriously: Carbon-aware scheduling reduces cost, reduces emissions, and demonstrates AI-augmented operations capability in a single 30-day experiment. If you cannot get organizational alignment on a project that accomplishes all three, the cultural readiness problem is more urgent than the technology readiness problem.

lowtouch.ai: Production-grade AI agents deployed inside your infrastructure. No data leaves. No code required. From idea to production in 4–6 weeks. Governed autonomy with full observability and human-in-the-loop controls throughout. Explore lowtouch.ai at ecloudcontrol.com

Infrastructure architecture is not purely a technical decision. It is an organizational commitment about what kind of engineering organization you intend to be, and what kind of technology future you are building toward.