Enterprise cloud migration has never been a pure infrastructure decision. But somewhere between the business case and go-live, it starts to feel like one. Workloads move. Costs rise. Operations remain largely manual. The expected ROI compresses. Most enterprises have experienced this cycle at least once. The question is not whether cloud transformation delivers value; it is why the delivery model keeps getting in the way.

The Problem Most CXOs Recognize but Rarely Name

The structural failure in most cloud migrations is not technical. It is operational. Teams invest heavily in getting workloads moved and almost nothing in making those workloads run autonomously after go-live. The result is a Kubernetes estate that generates dashboards but still requires humans to act on every alert, every anomaly, and every cost spike.

Add to that the cost risk: in the 2024 Flexera State of the Cloud report, enterprises estimated that they waste an average of 28% of their cloud spend. For large organizations, that share translates into very large absolute sums. The tooling to address it exists. The problem is integration, governance, and time to production.

What has changed is the availability of a structured, production-grade answer that does not require a 12-month custom build or a specialized AI engineering team to run it.

| Metric | Outcome |
|---|---|
| Migration Velocity | Up to 5x faster |
| Lower Cloud TCO | Up to 30% reduction |
| Idea to Production Agent | 4–6 weeks |

Kubernetes-First Migration: Repeatability as a Competitive Advantage

Container orchestration is now the operational baseline for enterprise cloud-native workloads. The question is no longer whether to containerize — it is how to do it at speed and scale without rebuilding delivery capacity for every engagement.

Cloud Control's AppZ platform solves this with a migration factory model. Over 150 CIS-hardened, vulnerability-scanned infrastructure-as-code templates cover the most common enterprise stacks across Java, .NET, Python, Kafka, Redis, Oracle, and more. A cloud landing zone is provisioned in under a week. Discovery and a fixed-price quote are delivered in four to eight hours. The methodology is a structured seven-phase process from CMDB review to managed SRE go-live, repeatable across tens or hundreds of workloads per year.

AppZ supports lift-and-shift, replatforming, and refactoring strategies, selected during technical review based on workload data. Policy-as-code, configuration-as-code, and IaC are baked into every template. Compliance controls are structural, not retrofitted. Alignment with PCI DSS, HIPAA, GDPR, ISO 27001, and SOC 2 is standard.

For CXOs, the relevant outcome is this: predictable scope, fixed-price economics, and production SLAs in weeks, not quarters, across a hyperscaler-neutral, air-gapped-capable footprint spanning AWS, Azure, GCP, Oracle Cloud, OpenShift, and Rancher.

GitOps and DevSecOps: Governance Built Into Delivery

GitOps has moved from architectural best practice to production engineering standard. Git as the single source of truth for both application and infrastructure state means every deployment is declarative, every change is version-controlled, and every rollback is a deterministic operation. AppZ implements end-to-end GitOps across GitHub, GitLab, Bitbucket, Azure DevOps, and AWS CodeCommit, with automated image scanning, signing, and a deployment strategy (rolling, blue/green, or recreate) chosen per workload.
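To make "every rollback is a deterministic operation" concrete, here is a minimal sketch of a GitOps-style reconcile step. It is not AppZ's implementation: the `Commit` type, the `plan` and `reconcile` functions, and the workload names are all hypothetical, and the cluster is modeled as a plain dictionary of workload name to image tag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """Hypothetical stand-in for a Git commit holding the declared state."""
    sha: str
    desired: dict  # workload name -> image tag


def plan(desired: dict, live: dict) -> list:
    """Return the actions needed to make `live` match `desired`."""
    actions = []
    for name in sorted(desired.keys() | live.keys()):
        want, have = desired.get(name), live.get(name)
        if want == have:
            continue  # already converged, nothing to do
        if want is None:
            actions.append(("delete", name, None))
        elif have is None:
            actions.append(("create", name, want))
        else:
            actions.append(("update", name, want))
    return actions


def reconcile(commit: Commit, live: dict) -> dict:
    """Apply the plan for `commit` and return the new live state."""
    new_live = dict(live)
    for verb, name, image in plan(commit.desired, live):
        if verb == "delete":
            del new_live[name]
        else:
            new_live[name] = image
    return new_live


history = [
    Commit("a1", {"api": "api:1.0", "web": "web:1.0"}),
    Commit("b2", {"api": "api:1.1", "web": "web:1.0", "worker": "worker:0.1"}),
]
live = reconcile(history[0], {})
live = reconcile(history[1], live)
# Rolling back is just reconciling against the earlier commit again.
rolled_back = reconcile(history[0], live)
```

Because the live state is always derived from a commit, reverting means pointing the reconciler at an earlier commit; no hand-written undo script is involved.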

Security is shift-left by design. Hardened base images, zero privileged access, a break-glass access model, drift prevention, SIEM integration, and continuous compliance posture checks are standard. For regulated industries, this is the baseline expectation. The platform is built to satisfy it from the ground up.
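As an illustration of what a policy-as-code check can look like, the sketch below evaluates a Kubernetes-style pod spec (represented as a dictionary) against three rules. The rules, the `violations` function, and the registry name are assumptions chosen for the example, not the platform's actual policy set; only the `securityContext.privileged` field is taken from the Kubernetes pod specification.

```python
def violations(pod_spec: dict, approved_registries: tuple) -> list:
    """Return human-readable policy violations for a pod spec."""
    found = []
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        image = container.get("image", "")
        security = container.get("securityContext", {})

        # Rule 1 (assumed): no privileged containers.
        if security.get("privileged", False):
            found.append(f"{name}: privileged containers are not allowed")

        # Rule 2 (assumed): images must come from an approved registry.
        if not image.startswith(approved_registries):
            found.append(f"{name}: image {image!r} is not from an approved registry")

        # Rule 3 (assumed): images must be pinned to a tag other than 'latest'.
        last_segment = image.rsplit("/", 1)[-1]
        if ":" not in last_segment or image.endswith(":latest"):
            found.append(f"{name}: image must be pinned to a non-latest tag")
    return found


spec = {
    "containers": [
        {
            "name": "api",
            "image": "registry.example.internal/api:1.4.2",
            "securityContext": {"privileged": False},
        },
        {
            "name": "debug",
            "image": "docker.io/busybox:latest",
            "securityContext": {"privileged": True},
        },
    ]
}
result = violations(spec, ("registry.example.internal/",))
```

Run in a pipeline before deployment, a check of this kind blocks a non-compliant manifest at review time, which is the practical meaning of shift-left.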

Agentic AI Operations: From Observability to Autonomy

Post-migration is where the value gap typically appears. The infrastructure is running. The observability stack is generating data. But the gap between signal and action is still filled by human effort at scale. This is the operational problem that Agentic AI is built to close.

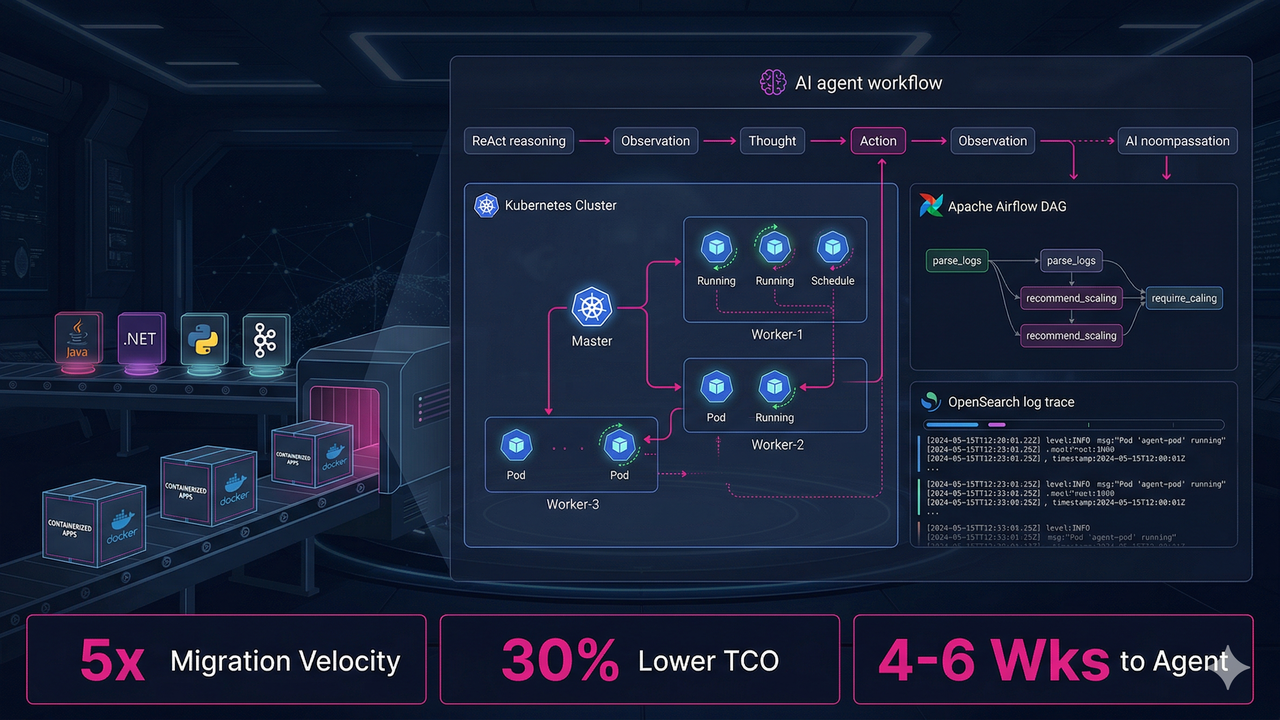

lowtouch.ai is a private, no-code Agentic AI platform that layers on top of AppZ's Kubernetes infrastructure to make cloud operations self-healing, cost-intelligent, and governed from day one. Agents run on ReAct and CodeAct frameworks, orchestrated via Apache Airflow DAGs, with full thought-logging through OpenSearch, Prometheus, and Grafana. Every agent runs entirely inside the customer's infrastructure. No data leaves the perimeter.
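The reason-act-observe pattern behind ReAct, together with thought-logging, can be sketched in a few lines. This is a toy illustration, not lowtouch.ai code: `scripted_model` stands in for a privately hosted LLM, the two tools and the pod name are hypothetical, and the thought log is kept in a Python list where a real deployment would ship each entry to a log store.

```python
import json


def get_pod_restarts(pod: str) -> int:
    # Hypothetical tool: a real one would query the cluster's metrics API.
    return {"checkout-7f9c": 14}.get(pod, 0)


def restart_pod(pod: str) -> str:
    # Hypothetical tool: a real one would call the cluster API.
    return f"restarted {pod}"


TOOLS = {"get_pod_restarts": get_pod_restarts, "restart_pod": restart_pod}


def scripted_model(history: list) -> dict:
    """Stand-in for an LLM: picks the next step from past observations."""
    observations = [h for h in history if h["type"] == "observation"]
    if not observations:
        return {"thought": "Check how often the pod has restarted.",
                "action": "get_pod_restarts", "input": "checkout-7f9c"}
    if len(observations) == 1 and observations[0]["value"] > 10:
        return {"thought": "Restart count is high; restart the pod.",
                "action": "restart_pod", "input": "checkout-7f9c"}
    return {"thought": "Remediation applied; stop.",
            "action": "finish", "input": "pod restarted"}


def run_agent(model, max_steps: int = 5):
    """Loop: reason, act, observe. Every decision is logged as JSON."""
    history, thought_log = [], []
    for step in range(max_steps):
        decision = model(history)
        thought_log.append(json.dumps({"step": step, **decision}))
        if decision["action"] == "finish":
            return decision["input"], thought_log
        result = TOOLS[decision["action"]](decision["input"])
        history.append({"type": "observation", "value": result})
    return "step limit reached", thought_log


outcome, thought_log = run_agent(scripted_model)
```

Each entry in `thought_log` records the thought, the chosen action, and its input, which is what makes an agent's decisions reviewable after the fact.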

For enterprises operating under RBI (Reserve Bank of India), GDPR, HIPAA, or PCI DSS requirements, "private AI" is not a feature. It is a prerequisite. The lowtouch.ai architecture satisfies it structurally, not as an add-on.

The lowtouch.ai Technical Architecture

Every agent is built on a production-grade, OpenAI-compatible modular stack. Multi-LLM support (Llama, Claude, Gemini, Nemotron) hosted privately means no vendor lock-in at the model layer. Apache Airflow DAGs provide auditable, retry-capable orchestration. Vector databases deliver contextual memory across long-running workflows. Thought-logging makes every agent decision transparent and audit-ready.
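What "auditable, retry-capable orchestration" means in practice can be shown without Airflow itself. The sketch below is a standard-library stand-in, not the Airflow API: `run_dag`, the task names, and the simulated timeout are hypothetical. It runs tasks in dependency order, retries failures, and records every attempt.

```python
from graphlib import TopologicalSorter


def run_dag(tasks: dict, deps: dict, max_retries: int = 2) -> list:
    """Run callables in dependency order with retries; return an audit log.

    `deps` maps each task name to the set of tasks it depends on.
    """
    audit = []
    for name in TopologicalSorter(deps).static_order():
        for attempt in range(1, max_retries + 2):
            try:
                tasks[name]()
                audit.append((name, attempt, "success"))
                break
            except Exception as exc:
                audit.append((name, attempt, f"failed: {exc}"))
        else:
            # Reached only if no attempt succeeded.
            raise RuntimeError(f"task {name!r} exhausted its retries")
    return audit


calls = {"remediate": 0}


def flaky_remediate():
    # Simulated transient failure on the first attempt only.
    calls["remediate"] += 1
    if calls["remediate"] == 1:
        raise TimeoutError("cluster API timed out")


tasks = {
    "discover": lambda: None,
    "remediate": flaky_remediate,
    "verify": lambda: None,
}
deps = {"discover": set(), "remediate": {"discover"}, "verify": {"remediate"}}
audit = run_dag(tasks, deps)
```

The audit list keeps the failed first attempt alongside the successful retry, so a reviewer can see not just that the workflow finished but how it got there.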

Tech stack: ReAct + CodeAct Frameworks · Apache Airflow Orchestration · Private LLM Hosting · RAG Pipelines · Vector Database Intelligence · OpenSearch Thought-Logging · Human-in-the-Loop (HITL) · Air-Gapped Deployment

Pre-Built Agents: From Idea to Production in 4–6 Weeks

The pre-built agent catalog eliminates the 6–12 month custom AI build cycle. Each agent is customizable, compliance-ready, and integrated with existing enterprise systems from day one.

SRE Agent — 50% lower MTTR. 35% less downtime. Real-time Kubernetes monitoring, AI-driven root-cause analysis, autonomous incident remediation, predictive autoscaling, and IAM posture checks. Integrates with Prometheus, Grafana, CloudWatch, Jira, and ServiceNow.

FinOps Agent — Up to 60% reduction in cloud overspend. Real-time spend visibility, rightsizing, reserved-instance optimization, tagging enforcement, and cost anomaly detection across AWS, Azure, and GCP. CFO-ready dashboards with chargeback reporting.
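Cost anomaly detection can take many forms; one simple, robust approach is the modified z-score based on the median and the median absolute deviation (MAD). The sketch below assumes that technique purely for illustration. The source does not say which method the FinOps Agent uses, and the function name, threshold, and spend figures are made up.

```python
from statistics import median


def cost_anomalies(daily_spend: list, threshold: float = 3.5) -> list:
    """Return indexes of days whose spend is an outlier.

    Uses the modified z-score: 0.6745 * |x - median| / MAD.
    The median-based statistic is not dragged upward by the spike itself,
    unlike a mean and standard deviation.
    """
    med = median(daily_spend)
    mad = median(abs(x - med) for x in daily_spend)
    if mad == 0:
        return []  # no spread to measure against
    return [
        i for i, x in enumerate(daily_spend)
        if 0.6745 * abs(x - med) / mad > threshold
    ]


# Illustrative daily spend in USD; day 5 contains a spike.
spend = [1020, 990, 1005, 1010, 998, 2400, 1003]
flagged = cost_anomalies(spend)
```

Here only the spike on day index 5 is flagged; ordinary day-to-day variation stays well under the threshold.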

Migration Agent (AppZ) — 40% faster decisions. 65% less estimation effort. AI-powered TCO/ROI modelling, dependency mapping, migration risk scoring, Airflow-orchestrated ETL, and checksum-verified cutover. Executive-ready migration business case dashboards.
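A checksum-verified cutover rests on a simple idea: compute a digest over the source data and the migrated data, and switch traffic only if they match. The following is a minimal sketch under assumptions, with hypothetical function names and in-memory rows standing in for database tables; it is not the Migration Agent's actual procedure.

```python
import hashlib


def table_checksum(rows) -> str:
    """Return a SHA-256 digest of the rows, independent of row order."""
    digest = hashlib.sha256()
    for row in sorted(rows):
        digest.update(repr(row).encode("utf-8"))
        digest.update(b"\n")  # separator so adjacent rows cannot blur together
    return digest.hexdigest()


def safe_to_cut_over(source_rows, target_rows) -> bool:
    """Allow cutover only when source and target contents are identical."""
    return table_checksum(source_rows) == table_checksum(target_rows)


source = [(1, "alice"), (2, "bob")]
migrated = [(2, "bob"), (1, "alice")]   # same data, different order
corrupted = [(1, "alice"), (2, "bod")]  # one value damaged in transit

ok = safe_to_cut_over(source, migrated)
blocked = safe_to_cut_over(source, corrupted)
```

Sorting before hashing keeps the check from failing merely because the target returns rows in a different order.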

Help Desk Agent — 80% of tickets auto-resolved. NLP-driven cognitive triage, autonomous L1/L2 resolution, and smart escalation with full context. Integrates with ServiceNow, Jira, Confluence, and SharePoint via private RAG pipelines.

Architecture Summary: What Enterprises Are Actually Buying

| Layer | Technology | Enterprise Outcome |
|---|---|---|
| Migration Factory | AppZ, 150+ IaC templates, 7-phase methodology | Predictable scope, fixed-price economics, weeks not months |
| Agent Frameworks | ReAct, CodeAct | Autonomous reasoning with auditable execution steps |
| Orchestration | Apache Airflow DAGs | Retry logic, lineage tracking, full workflow observability |
| LLM Hosting | Llama, Claude, Gemini, Nemotron (private) | No vendor lock-in, no data exfiltration |
| Observability | OpenSearch, Prometheus, Grafana + Thought-Logging | Full agent reasoning transparency, audit-ready |
| Governance | HITL controls, RBAC, approval workflows | Agents pause at critical gates; approvals via Slack, Teams, email |
| Compliance | ISO 27001, SOC 2, GDPR, PCI DSS, HIPAA, RBI | Audit-ready from day one, not retrofitted |
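The governance row describes agents pausing at critical gates until a human approves. A minimal sketch of such a human-in-the-loop gate is shown below. The `ApprovalGate` class, the list of critical actions, and the `approver` callback are hypothetical; in a real deployment the callback would be backed by a chat or email approval flow, whereas here it is a plain function.

```python
from dataclasses import dataclass, field
from typing import Callable

# Assumed examples of actions that must never run without sign-off.
CRITICAL_ACTIONS = {"delete_namespace", "scale_to_zero", "rotate_credentials"}


@dataclass
class ApprovalGate:
    approver: Callable[[str, str], bool]  # hypothetical human-approval hook
    audit: list = field(default_factory=list)

    def execute(self, action: str, target: str, run: Callable[[str], str]) -> str:
        """Run `run(target)`, pausing for approval if the action is critical."""
        if action in CRITICAL_ACTIONS:
            approved = self.approver(action, target)
            self.audit.append((action, target, "approved" if approved else "rejected"))
            if not approved:
                return "blocked"
        else:
            self.audit.append((action, target, "auto"))
        return run(target)


# Stand-in approver: rejects anything aimed at production.
gate = ApprovalGate(approver=lambda action, target: target != "prod")

r1 = gate.execute("restart_pod", "prod", lambda t: f"restarted {t}")
r2 = gate.execute("scale_to_zero", "prod", lambda t: f"scaled {t} to zero")
r3 = gate.execute("scale_to_zero", "staging", lambda t: f"scaled {t} to zero")
```

Routine actions pass straight through, critical ones wait on the approver, and every decision lands in the audit trail either way.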

The Outcome-Based Model

Outcome-based contracts are available. Billing tied to successfully migrated workloads and measurable operational KPIs, rather than to time and materials, is viable only when the delivery model is standardized and observable enough to commit to results. Pre-tested IaC templates and pre-built agents create that predictability. A vendor's willingness to bill on outcomes is itself a signal worth paying attention to.

For CTOs and CIOs evaluating cloud transformation programs, the practical takeaway is straightforward. The operating model that treats migration and post-migration AI operations as separate, sequential programs is being replaced by an integrated, governed delivery model, one that targets production outcomes in weeks and measurable ROI within the first quarter after go-live.

Start with a Technical Discovery Session. Production in 4–6 Weeks.

Fixed-price migration quote in 4–8 hours. AI agent in production in 4–6 weeks. Outcome-based contracts available.

- Email: info@ecloudcontrol.com

- Website: www.ecloudcontrol.com | www.lowtouch.ai